Mastering the 3nf data model for scalable SaaS systems

At its core, a 3NF data model is a way of organizing information to get rid of duplicates and ensure everything is consistent. Think of it as the ultimate Marie Kondo method for your business data—every single piece of information has a specific, logical home, and only one.

Why a 3NF Data Model Is Your Automation Superpower

For anyone running a SaaS or B2B company, a well-built database isn't just some technical footnote; it’s the engine that drives reliable automation and sustainable growth. It’s the difference between a chaotic stockroom and a perfectly labeled warehouse where anyone can find, update, and manage inventory in seconds. That’s the kind of operational clarity a 3NF data model brings to the table.

When your data is a mess, you're essentially running your business on a shaky foundation. This inevitably leads to costly and embarrassing mistakes, like your CRM sending a renewal reminder to a customer who churned last month, all because their status wasn't updated correctly across different spreadsheets or tables.

The Bedrock of Reliable Workflows

A 3NF data model is designed to prevent these exact kinds of problems by enforcing consistency. When a customer's address or subscription plan changes, your team updates it in one single place. That change then automatically ripples through every system, ensuring every automated workflow—from marketing campaigns to invoicing—is running on correct, up-to-the-minute information.

This concept of a single source of truth is what makes your operations hum. It also provides the stable data foundation required for more advanced tools, especially AI-powered systems that are completely dependent on clean, trustworthy data to produce useful results. Getting a handle on how fundamental data models support modern automation suites like the Microsoft Power Platform shows you just how powerful a solid data strategy can be.

A disorganized database is the number one cause of automation failure. It creates a domino effect where one small inconsistency can disrupt an entire workflow, leading to wasted resources and missed opportunities.

How 3NF Transforms Your SaaS Operations

Moving to a 3NF structure delivers tangible benefits that ripple through your entire company. The table below shows just how big the shift can be when moving from a messy, denormalized model to a clean, 3NF-compliant one.

| Operational Area | Problem in Denormalized Model | Solution in 3NF Model |

|---|---|---|

| Data Updates | An employee's name change requires manual updates in 5 different tables, risking errors. | The name is updated once in the Employees table, and all related records reflect the change instantly. |

| Reporting & BI | Reports show conflicting sales numbers because customer data is duplicated and out of sync. | All reports pull from a single, trusted source of truth, ensuring 100% data consistency. |

| Storage Costs | Storing redundant customer and order details across multiple tables inflates database size and cost. | Information is stored only once, drastically reducing storage footprint and associated expenses. |

| System Scalability | Adding a new product feature requires complex and risky changes to multiple, tangled tables. | New tables and relationships can be added cleanly without disrupting the existing data structure. |

Ultimately, a well-organized database gives you the confidence to automate aggressively, knowing your workflows are built on a rock-solid foundation that won't let you down.

More Than Just a Legacy Practice

You might think of database normalization as old-school, but it's a strategic move that pays dividends across the board. Despite the hype around newer data architectures, its core principles remain essential for operational excellence.

In fact, a Coalesce report on 2023 data warehouse trends found that a surprising 47% of organizations still actively use 3NF schemas. This shows just how relevant this time-tested model continues to be for businesses that prioritize data integrity and efficiency.

Building Your Understanding of Database Normalization

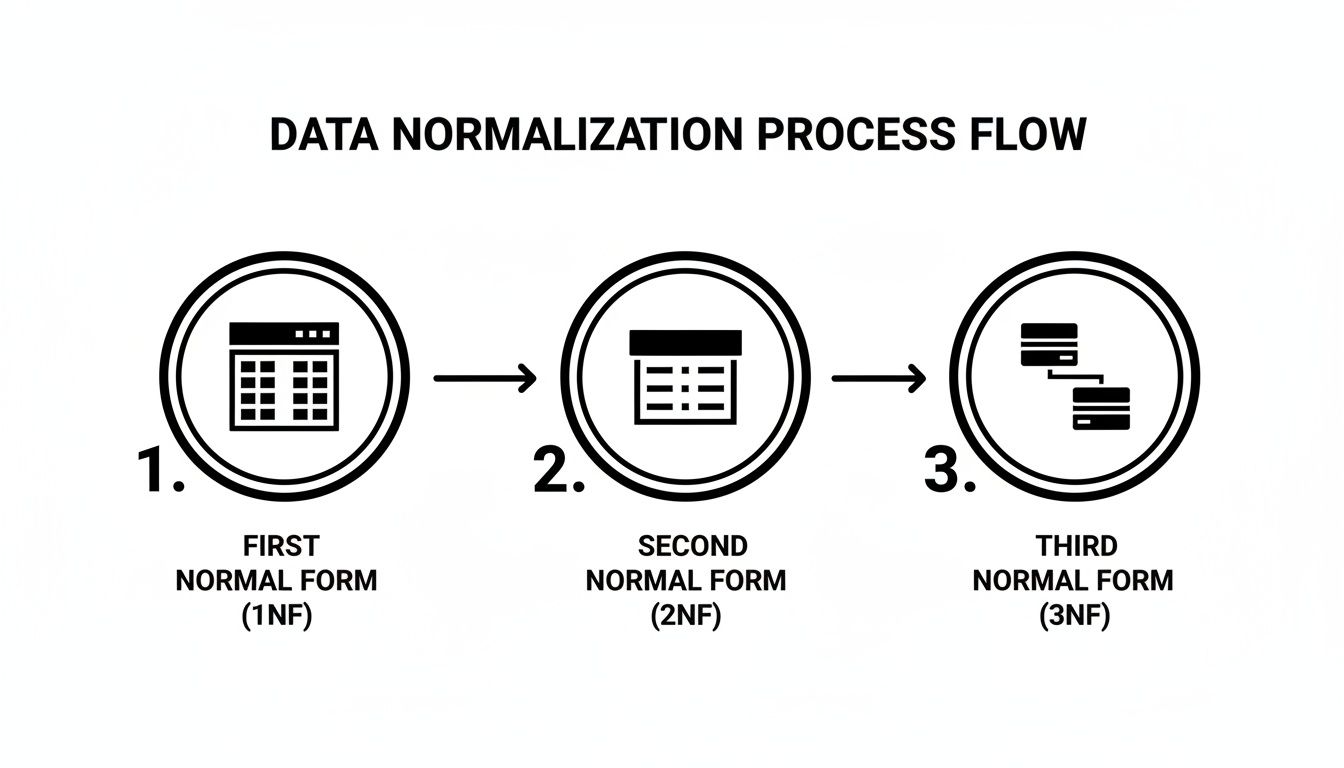

Getting to a clean, efficient database isn’t a single jump; it's a methodical climb. This process, called database normalization, moves your data through progressive stages of organization. Each stage builds directly on the one before it, helping you systematically untangle the data chaos that can cripple your automation workflows and reporting accuracy.

We're going to walk through these stages—First Normal Form (1NF), Second Normal Form (2NF), and finally, the 3NF data model—using real-world business examples. This step-by-step approach doesn't just show you the rules; it reveals why they exist and how they prevent expensive data errors down the line.

Step 1: First Normal Form (1NF)

The first milestone, First Normal Form (1NF), tackles the most common and frustrating database mistake: cramming multiple values into a single cell. Think of a Contacts spreadsheet where one cell under a "Services" column reads "SEO, PPC, Content Marketing." From a data management perspective, that’s a complete nightmare.

To reach 1NF, you have to ensure that every column in every row holds only one, indivisible value. This means you need to break up that messy list into individual rows.

- Before 1NF: A single row for "Client A" has a "Services" cell containing "SEO, PPC."

- After 1NF: You now have two distinct rows for "Client A"—one for the "SEO" service and another for the "PPC" service.

This simple adjustment instantly makes your data searchable, sortable, and truly useful. Without it, you can't even reliably answer a basic question like, "How many clients are using our PPC service?"

Step 2: Second Normal Form (2NF)

Once your tables are in 1NF, it's time to tackle Second Normal Form (2NF). This stage addresses a more subtle problem known as partial dependency. This happens when you have a primary key made up of multiple columns (a composite key), but another column in the table depends on only part of that key.

For instance, let's say you have an OrderItems table where the primary key is a combination of OrderID and ProductID. If you also store ProductName in that same table, you've created a partial dependency. The ProductName is related to the ProductID alone, not the entire OrderID + ProductID combination.

Key Insight: 2NF forces you to ask: "Does this piece of information describe the entire record, or just a part of it?" If the answer is "just a part," it belongs in its own table.

To fix this, you'd move ProductName into a separate Products table, with ProductID as its key. The OrderItems table then just needs the ProductID to link to all the product details. This prevents update headaches; if a product's name changes, you only have to update it in one place, not every single order line it appears on.

Step 3: Third Normal Form (3NF)

Finally, we arrive at the Third Normal Form (3NF). A database is in 3NF if it’s already in 2NF and, crucially, contains no transitive dependencies. A transitive dependency is when a non-key column depends on another non-key column, which in turn depends on the primary key.

Picture an Orders table that includes SalespersonID and also SalespersonManager. The manager isn't determined by the order itself; they're determined by the salesperson. This creates an indirect and risky chain of dependency: OrderID → SalespersonID → SalespersonManager. You can read more about how different forms work together in our comprehensive guide to the database normalization form.

To create a proper 3NF data model, you break this chain. You move the SalespersonManager out of the Orders table and into the Salespersons table where it logically belongs. This simple move ensures that every non-key column in your Orders table depends only on the primary key, giving you a clean, reliable, and automation-ready data structure.

Your Step-By-Step Guide to Building a 3NF Data Model

Theory is one thing, but seeing it in action is another. Let's get our hands dirty and actually build a proper 3NF data model. We’ll start with something most of us have seen: a messy, all-in-one spreadsheet for tracking leads, and transform it into a clean, structured, and automation-ready database.

This walkthrough will guide you through each normalization stage—1NF, 2NF, and finally 3NF—using clear "before" and "after" examples. Think of this not just as a technical exercise, but as a practical blueprint you can apply to your own data, whether it's trapped in a CRM, a project management tool, or a simple Google Sheet.

The "Before" Picture: A Tangled Leads Table

Imagine your sales team uses a single table to track every lead interaction. It probably started out simple enough, but over time, it’s ballooned into a chaotic jumble of information about people, the companies they work for, and the marketing campaigns that brought them to you.

This is what we call an unnormalized table (or 0NF). It's packed with repeating information and is a ticking time bomb for data entry errors.

Unnormalized Leads_Master Table

| LeadID | LeadName | LeadEmail | CompanyName | CompanyHQ | CampaignName | CampaignManager |

|---|---|---|---|---|---|---|

| 101 | Jane Doe | jane@bigcorp.com | Big Corp | New York | Q2 Webinar | Alice |

| 102 | John Smith | john@startup.com | Startup Inc | San Francisco | SEO Outreach | Bob |

| 103 | Sam Jones | sam@bigcorp.com | Big Corp | New York | Q2 Webinar | Alice |

This single-table approach immediately causes headaches. If Big Corp moves its headquarters, how many rows do you have to find and update? You’re hoping your team catches them all, but hope isn't a data strategy. This is a classic data integrity nightmare waiting to happen.

Stage 1: Achieving First Normal Form (1NF)

The very first step is getting to First Normal Form (1NF). The rule is simple: each cell must contain a single, "atomic" value. No comma-separated lists, no multiple entries stuffed into one field.

Looking at our table, we're already compliant. Every cell has just one piece of information. That was easy. So, let’s move on.

Stage 2: Achieving Second Normal Form (2NF)

Next up is Second Normal Form (2NF), which is all about eliminating partial dependencies. A partial dependency happens when a non-key field depends on only part of a composite primary key (a key made of multiple columns).

Our Leads_Master table uses a simple primary key (LeadID), so it doesn't have a composite key. By definition, it can't have partial dependencies, which means it technically already satisfies 2NF. However, just because we pass the test doesn't mean the data is clean. The real culprit is hiding in plain sight, and it’s what 3NF is designed to fix.

Stage 3: Achieving a 3NF Data Model

This is where the major cleanup happens. A table is in Third Normal Form (3NF) if it’s already in 2NF and has no transitive dependencies. A transitive dependency is an indirect relationship where a non-key attribute depends on another non-key attribute, not on the primary key itself.

Let's look at our table again. The LeadID is the key. But:

- The

CampaignManagerisn't determined by theLeadID; they’re determined by theCampaignName. - The

CompanyHQisn't determined by theLeadID; it’s determined by theCompanyName.

These fields are providing facts about other fields, not about the lead. This indirect chain of dependencies is what makes the data so fragile. To achieve a true 3NF data model, we have to break these chains by separating the concepts into their own logical tables.

A great way to remember the goal of 3NF is the old saying: every non-key attribute "must provide a fact about the key, the whole key, and nothing but the key."

To visualize this transformation, let's track the journey of our data from its initial messy state to a well-structured 3NF model.

Example Data Normalization Journey to 3NF

This table shows how we break down the single, problematic Leads_Master table into three distinct, logical tables that are much easier to manage.

| Normalization Stage | Table Structure Example | Key Improvement |

|---|---|---|

| 0NF (Unnormalized) | One large Leads_Master table with all columns |

Data is all in one place but is highly redundant and prone to errors. |

| 1NF/2NF | Same structure, but recognized as compliant | The table has atomic values and no partial dependencies, but transitive dependencies remain. |

| 3NF (Final State) | Three separate tables: Leads, Companies, Campaigns |

Each table focuses on a single entity, eliminating redundancy and update anomalies. |

By following this path, we isolate each concept (Leads, Companies, Campaigns) into its own table, creating a system that is both stable and scalable.

The "After" Picture: The 3NF Transformation

The result of our work is three clean, interconnected tables.

CompaniesTable: All company-specific information lives here.CompanyID (PK) CompanyName CompanyHQ 5001 Big Corp New York 5002 Startup Inc San Francisco CampaignsTable: All campaign details are stored here.CampaignID (PK) CampaignName CampaignManager 801 Q2 Webinar Alice 802 SEO Outreach Bob LeadsTable: Now lean and focused, this table connects to the others using foreign keys (CompanyID,CampaignID).LeadID (PK) LeadName LeadEmail CompanyID (FK) CampaignID (FK) 101 Jane Doe jane@bigcorp.com 5001 801 102 John Smith john@startup.com 5002 802 103 Sam Jones sam@bigcorp.com 5001 801

With this structure, if a company moves its headquarters or a campaign gets a new manager, you only have to make a single update in one place. That's the power of a solid data foundation. For more hands-on scenarios, you can explore another third normal form with example walkthrough on our blog.

Putting 3NF to Work in Your B2B Tech Stack

Theory is one thing, but putting a 3NF data model into practice is where the real value emerges. This isn't just an abstract database concept; it's about how you apply these principles to the tools you rely on every day, whether that's a PostgreSQL backend, a MySQL database, or the architecture of a new CRM. The goal is always the same: create a single source of truth that fuels reliable automation and keeps your data clean.

When you get it right, 3NF means updating a client's details in one place automatically updates it everywhere else. This simple consistency prevents the kind of costly, embarrassing mistakes that erode customer trust—like sending an upsell offer to a customer who just downgraded their plan. That level of operational integrity is non-negotiable.

This flowchart shows how you can get from a messy, flat file to a clean, structured 3NF design.

You can see how data is refined at each step. It starts as a simple spreadsheet (1NF), gets organized into basic tables (2NF), and finally becomes a set of efficiently interconnected tables—the very heart of a 3NF model.

Building a Foundation That Can Scale

For any SaaS company, building systems that can handle growth is everything. A crucial piece of this puzzle, especially in a B2B context, is designing for complex setups like a multi-tenant architecture. In these environments, 3NF isn't just a best practice; it's essential for keeping each tenant's data isolated, consistent, and secure. It prevents data leakage between customers and helps maintain performance as you add more users.

The real ROI of a 3NF model isn't just a tidy database. It's the critical business workflows it protects. Clean data ensures your AI-powered tools provide accurate insights, your automated outreach campaigns hit the right people, and your team spends less time hunting down and fixing preventable errors.

To make this happen, you can focus on a few practical steps:

- Document Everything: Don't skip this. Create clear data dictionaries and diagrams that map out every table, field, and relationship. This documentation becomes your team's guide for troubleshooting and onboarding.

- Monitor Data Integrity: Set up automated checks that enforce referential integrity. Think of these as guardrails for your database, preventing orphaned records and making sure the relationships between tables never break.

- Prioritize a Central Integration Layer: As you'll see in many data integration best practices, establishing a clean, 3NF-compliant "silver" layer in your data warehouse is a game-changer. This becomes the stable foundation that all your analytics and reporting tools can build upon.

Following this approach turns your database from a passive storage container into a strategic asset. By building on a rock-solid data foundation, you get more value from every workflow, automation tool, and AI initiative you invest in.

Knowing When to Bend the Rules with Denormalization

Any experienced data professional will tell you that the rules aren't always absolute. While the 3NF data model is the undisputed champion for keeping transactional systems like your CRM or ERP clean and consistent, it has an Achilles' heel: it can seriously slow down complex reporting. This is where we make a deliberate, strategic decision to bend the rules.

This process is called denormalization. It's crucial to understand that this isn't a mistake or a shortcut born from lazy design—it's a calculated trade-off. You're intentionally adding some redundancy back into your database to make it faster for "read-heavy" tasks like analytics. By doing this, you drastically cut down on the number of complicated table joins needed to pull data, which are notorious performance killers.

Think of it this way: your pristine 3NF database is like the master library archive. Every single fact is stored in one specific place to ensure there are no contradictions. It's perfect for writing, updating, and maintaining the ultimate source of truth. A denormalized database, however, is like the custom-printed executive summary handed out before a big meeting—it pulls all the key points from various sources into one easy-to-read document, built for speed and convenience.

When Performance Trumps Purity

So, when do you make this trade-off? The classic use case for denormalization is in a data warehouse or any database built specifically for analytics. These systems are all about speed and are overwhelmingly "read-heavy," meaning people are constantly pulling data out, not putting it in. Analysts and BI tools are running massive queries, often scanning millions of rows and linking tons of tables to uncover business insights.

Forcing every one of those queries to navigate a perfectly structured 3NF schema with a dozen different joins would be painfully slow. The user experience would be awful, with dashboards taking minutes to load instead of seconds. In a world where self-service BI is now rated as "critical" or "very important" by 60% of organizations, that kind of lag is a non-starter.

Denormalization is a strategic choice to optimize for read performance, especially in analytics. You are consciously sacrificing storage efficiency and update simplicity to gain query speed, which is often the right trade-off for reporting systems.

A Tale of Two Models

This reality naturally leads to a powerful and widely-used approach in modern data architecture: using two different models for two different jobs.

Transactional Systems (OLTP): This is your live, operational database that runs the business day-to-day. It should absolutely stick to a strict 3NF data model. Here, data integrity, consistency, and quick, targeted updates are everything. This is your "source of truth."

Analytical Systems (OLAP): This is your data warehouse or reporting database. It should use a denormalized model, like a star or snowflake schema. The top priority here is blazing-fast querying for business intelligence and analytics.

Data typically flows from the normalized transactional system into the denormalized analytical one through an ETL (Extract, Transform, Load) process. This setup gives you the best of both worlds: rock-solid data integrity for your operations and lightning-fast reporting for your decision-makers. The real expertise lies in knowing which tool to use for which job.

Common Questions About the 3NF Data Model

Okay, we’ve covered the theory. But how does this all play out in the real world? When founders and managers start moving from diagrams on a whiteboard to actual implementation, some very practical questions always come up. It's one thing to know the rules, but it's another to apply them confidently to your own systems.

Let's tackle the most common concerns we hear when building a 3NF data model.

Is 3NF Still Relevant with NoSQL Databases?

Yes, absolutely. It might seem counterintuitive since NoSQL databases are known for their flexibility, but the principles behind 3NF are more important than ever. Think of it less as a rigid set of rules and more as a disciplined way of thinking about your data.

Applying 3NF logic helps you prevent the kind of messy data inconsistencies that can bring a growing B2B application to its knees. When your application logic is simpler because the data is clean, your systems become far more reliable and easier to scale. It doesn't matter what database technology you're using; a messy structure will always cause problems down the road.

The goal of a 3NF data model isn't just to follow rules; it's to create a single source of truth. That principle is just as valuable in a flexible NoSQL environment as it is in a traditional relational database.

Will Moving to 3NF Slow Down My Application?

This is the classic performance question, and the honest answer is: it depends on what you're doing. It all comes down to a trade-off between "write" operations and "read" operations.

For "write" operations (like updating a customer's phone number), 3NF is almost always faster. You're changing that piece of information in exactly one place. It's clean, targeted, and incredibly efficient.

For "read" operations (like running a huge report that pulls from ten different tables), the extra joins required by 3NF can sometimes be slower than reading from a single, wide, denormalized table.

This is precisely why many modern systems use a hybrid approach. They run their live, day-to-day operations on a 3NF database for speed and integrity, but they sync that data to a separate, denormalized data warehouse built for heavy-duty analytics. You get the best of both worlds.

How Can I Check if My Database Is in 3NF?

You don't need a PhD in database administration to spot the most obvious red flags. It really boils down to looking for two specific types of dependencies in your tables.

- Partial Dependencies: Take a look at any tables with a composite primary key (a key made of multiple columns). If you have a field in that table that only depends on part of that key, you've got a problem. This violates 2NF, which is a prerequisite for 3NF.

- Transitive Dependencies: This is the big one for 3NF. Do you have any fields that depend on another non-key field? For instance, if your

Orderstable contains aSalesperson_Regional_Officecolumn, you have a transitive dependency. The office location depends on the salesperson, not directly on the order.

Finding and methodically untangling these dependencies is the core work of normalization. It's how you ensure every piece of data lives in its one, true, logical home, creating that clean and efficient 3NF data model.

At MakeAutomation, we specialize in building these robust data foundations to power your growth. We can help you implement AI and automation frameworks that eliminate manual work and scale your operations. Book a discovery call today to see how we can optimize your workflows and boost your ROI.