Sixth Normal Form: Temporal Data and SaaS Use Cases

Most advice about sixth normal form stops at "don't use it." That's incomplete advice.

If you're building a standard CRUD app with straightforward reads and writes, then yes, 6NF usually creates more pain than value. But modern SaaS systems don't just store current state anymore. They track state changes, trigger automation from field-level updates, preserve audit history, and feed downstream analytics that care about when a fact became true and when it stopped being true.

That changes the conversation.

A B2B platform that needs to answer questions like "what was the customer's risk tier when the workflow fired?" or "which subscription value was active when billing generated this invoice?" is no longer dealing with a simple row-update problem. It's dealing with time-bound facts. In that narrow but important space, sixth normal form becomes less of a classroom concept and more of a precise modeling tool.

Why Sixth Normal Form Is Not Just Academic

Many development groups learn normalization through the practical ladder from 1NF to 3NF, then stop there. That's reasonable. For most operational schemas, 3NF is the right balance of integrity, readability, and developer ergonomics. If your team needs a quick refresher on the earlier stages, this guide to database normalization form fundamentals is a useful baseline.

The problem is that common guidance treats 6NF as if "rarely used" means "never useful." Those aren't the same thing. A pattern can be niche and still be the best answer when the data problem is unusually strict.

Where SaaS systems hit the limit of ordinary normalization

Consider a customer record in a subscription platform. Legal wants an audit trail. RevOps wants to know which plan value existed at the time a renewal workflow ran. Product wants event-driven automations when a single field changes. Data engineering wants a clean historical model instead of reconstructing state from a messy update log.

A conventional normalized table can store the latest value well. It struggles when each attribute has its own timeline.

That's where 6NF becomes interesting. Instead of thinking in terms of "one entity row with many columns," it pushes you toward "one fact per relation." That sounds extreme, because it is. But it maps well to systems where each field can change independently and where the history of that field matters as much as its current value.

Practical rule: If your hardest requirement is current-state retrieval, 6NF is probably the wrong tool. If your hardest requirement is reconstructing exact state at a moment in time, it deserves a serious look.

The real use case is not purity

This isn't about chasing theoretical perfection. It's about choosing a schema that aligns with automation and temporal truth.

Senior engineers usually reject 6NF for the right reasons. Too many joins. Too many tables. Awkward ORM behavior. All true. But those objections mostly apply when you try to make 6NF the center of a general-purpose application schema. In high-change, compliance-heavy, event-aware domains, the cost profile shifts.

Used surgically, sixth normal form can make temporal querying, auditability, and change-driven workflows far cleaner than bolting history tables onto a wide row model.

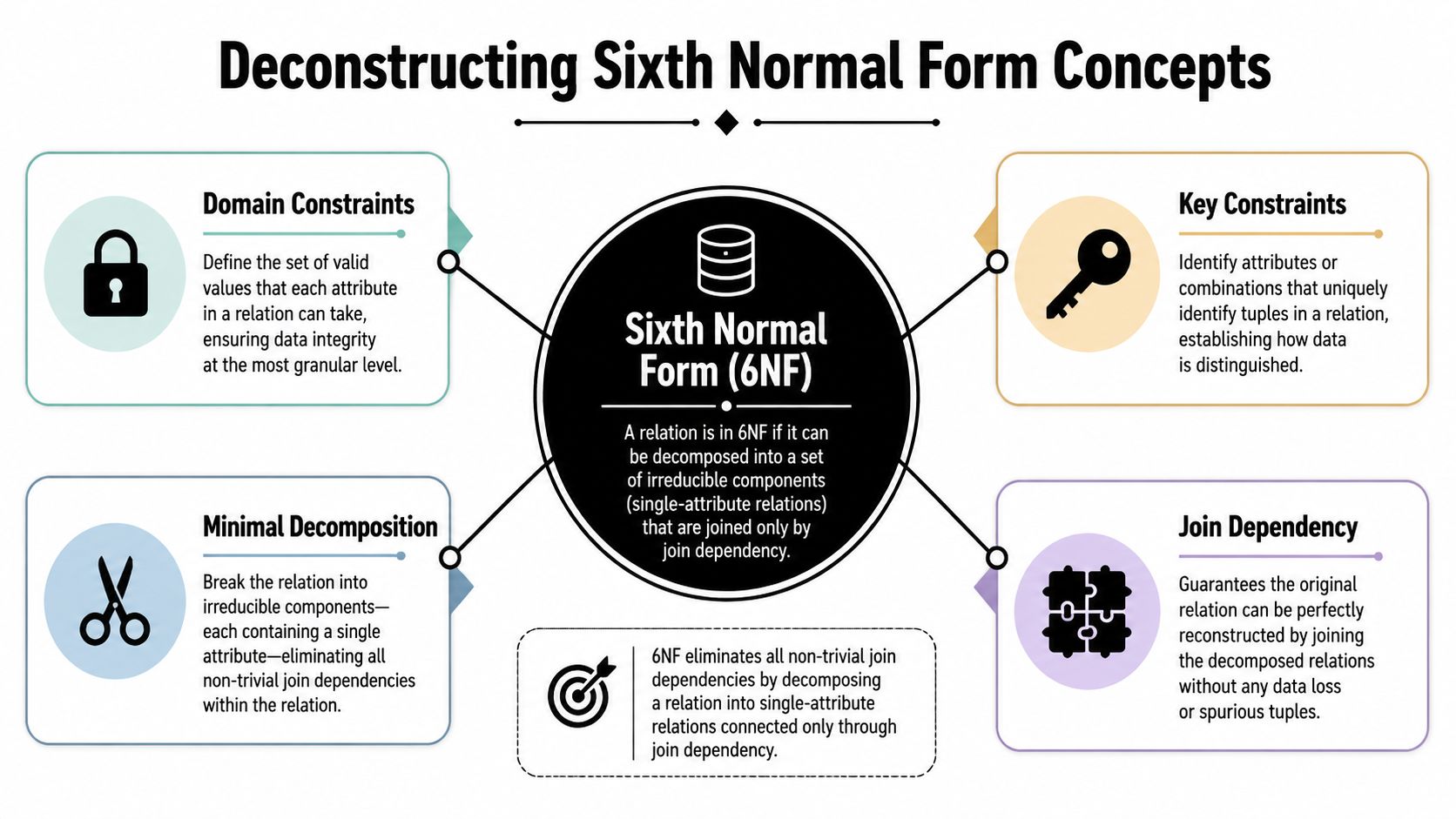

Deconstructing Sixth Normal Form Concepts

Sixth normal form is the point where a relation has been decomposed into irreducible parts. Formally, Christopher J. Date defined 6NF as a state where relation variables are decomposed so each table contains at most a primary key and one non-key attribute, and a relation can only reach 6NF if it is already in 5NF (formal description of sixth normal form).

That definition is accurate, but it isn't how architects usually internalize it.

Think in single facts

Take a business-card style record with these fields:

- customer_id

- legal_name

- billing_email

- plan_tier

- renewal_date

In a normal transactional design, those attributes live together because they describe the same customer. In 6NF, that grouping is treated as too coarse if each attribute is an independent fact with its own lifecycle. You split the row into separate relations so each one states exactly one thing about the entity.

That means a table like:

- customer_legal_name

- customer_billing_email

- customer_plan_tier

- customer_renewal_date

Each table carries the key, plus one attribute.

Why 5NF matters first

6NF isn't an alternative to earlier normalization. It's the far edge of it. You don't jump from a messy operational table straight to 6NF and call it good. The relation must already satisfy stricter relational rules before this last decomposition even makes sense.

The phrase trivial join dependency matters here. In plain English, once you're in 6NF, the only valid way to describe join dependencies is the obvious one. You're no longer hiding compound facts inside a wider relation. Each relation is already as reduced as it can reasonably be.

A useful mental model is this: 3NF removes transitive dependencies, 5NF removes complex join anomalies, and 6NF asks whether any remaining table still bundles facts that could stand on their own.

Why this feels excessive in application development

Because most application development optimizes for row assembly, not fact isolation.

ORMs like Prisma, TypeORM, Sequelize, Hibernate, and Entity Framework work best when a model maps to a table that looks like a cohesive object. A 6NF design breaks that expectation. The database sees precision. The application layer often sees fragmentation.

That doesn't make 6NF wrong. It means the model is optimized for a different priority.

A good way to see the contrast is to look at industries that spend serious effort on data shape. In regulated reporting and analytics-heavy systems, teams often care significantly about atomized, well-structured facts. This example on structuring FDIC call reports for clarity is a useful reminder that data modeling choices are often driven by downstream interpretation, not just storage convenience.

What 6NF is really buying you

At a practical level, 6NF buys four things:

- Attribute independence so one field can evolve without dragging unrelated fields with it

- Temporal precision because each fact can carry its own validity boundaries

- Cleaner audit semantics since each row states one thing that became true

- Composable history where you can reconstruct state from atomic assertions

Those benefits are narrow. They're also real.

From Theory to Schema a Step-by-Step Example

Let's make this concrete with a familiar SaaS table.

Start with a typical 3NF-style profile table:

CREATE TABLE UserProfile (

UserID BIGINT PRIMARY KEY,

UserName VARCHAR(255) NOT NULL,

Email VARCHAR(255) NOT NULL,

SubscriptionLevel VARCHAR(100),

LastLoginDate TIMESTAMP

);

This is easy to understand. It also bundles several distinct facts into one row. That's fine for most apps. But if each attribute changes on its own cadence, and you need to retain clean field-level history, this shape starts to work against you.

Step 1 split the facts apart

A 6NF decomposition turns each non-key attribute into its own relation.

CREATE TABLE UserProfile_UserName (

UserID BIGINT PRIMARY KEY,

UserName VARCHAR(255) NOT NULL,

FOREIGN KEY (UserID) REFERENCES UserProfile_Base(UserID)

);

CREATE TABLE UserProfile_Email (

UserID BIGINT PRIMARY KEY,

Email VARCHAR(255) NOT NULL,

FOREIGN KEY (UserID) REFERENCES UserProfile_Base(UserID)

);

CREATE TABLE UserProfile_SubscriptionLevel (

UserID BIGINT PRIMARY KEY,

SubscriptionLevel VARCHAR(100),

FOREIGN KEY (UserID) REFERENCES UserProfile_Base(UserID)

);

CREATE TABLE UserProfile_LastLoginDate (

UserID BIGINT PRIMARY KEY,

LastLoginDate TIMESTAMP,

FOREIGN KEY (UserID) REFERENCES UserProfile_Base(UserID)

);

CREATE TABLE UserProfile_Base (

UserID BIGINT PRIMARY KEY

);

The base table holds the entity identity. Each attribute table holds one fact.

Many engineers stop at this point and correctly observe that the schema has become significantly more difficult to read. That's the main cost of 6NF. The decomposition is not subtle. A relation with five non-key attributes would decompose into five separate tables, each requiring joins for retrieval. That's part of the formal trade-off already noted in the earlier section.

Step 2 compare the before and after shape

| Schema Transformation from 3NF to 6NF | |

|---|---|

| 3NF Structure (UserProfile Table) | 6NF Structure (Decomposed Tables) |

UserProfile(UserID, UserName, Email, SubscriptionLevel, LastLoginDate) |

UserProfile_Base(UserID) |

| One row contains all profile facts | UserProfile_UserName(UserID, UserName) |

| Simple reads for current state | UserProfile_Email(UserID, Email) |

| Updates overwrite prior values unless history is added separately | UserProfile_SubscriptionLevel(UserID, SubscriptionLevel) |

| Natural fit for ORM entity models | UserProfile_LastLoginDate(UserID, LastLoginDate) |

Step 3 add temporal validity where 6NF becomes useful

The payoff appears when you introduce validity ranges.

CREATE TABLE UserProfile_SubscriptionLevel_History (

UserID BIGINT NOT NULL,

ValidFrom TIMESTAMP NOT NULL,

ValidTo TIMESTAMP,

SubscriptionLevel VARCHAR(100) NOT NULL,

PRIMARY KEY (UserID, ValidFrom)

);

CREATE TABLE UserProfile_Email_History (

UserID BIGINT NOT NULL,

ValidFrom TIMESTAMP NOT NULL,

ValidTo TIMESTAMP,

Email VARCHAR(255) NOT NULL,

PRIMARY KEY (UserID, ValidFrom)

);

Now each attribute has its own history, independent of the others. Email can change without forcing a version update on subscription level. Subscription level can change without touching last login. That's the practical elegance people miss when they dismiss sixth normal form too quickly.

If you're storing "what changed, when it changed, and what was true at the time," 6NF aligns the schema with the question.

Step 4 reconstruct state intentionally

To read current state, you join the latest valid rows.

SELECT

b.UserID,

n.UserName,

e.Email,

s.SubscriptionLevel,

l.LastLoginDate

FROM UserProfile_Base b

LEFT JOIN UserProfile_UserName n ON b.UserID = n.UserID

LEFT JOIN UserProfile_Email e ON b.UserID = e.UserID

LEFT JOIN UserProfile_SubscriptionLevel s ON b.UserID = s.UserID

LEFT JOIN UserProfile_LastLoginDate l ON b.UserID = l.UserID;

For point-in-time state, you join against history tables filtered by a target timestamp. That query is more complex, but the complexity is honest. The schema is expressing that time is part of the data, not an afterthought.

Where this pattern works best

This decomposition works well when:

- Each attribute changes independently and you care about preserving that independence

- Automations trigger from field changes rather than whole-record updates

- Auditors or analysts need exact historical truth

- You can hide assembly complexity behind views, materialized views, or service-layer query logic

It works poorly when your application mostly needs "load row, update row, save row" behavior and doesn't care much about temporal precision.

The Hidden Performance and Storage Trade-Offs

The obvious drawback of 6NF is join overhead. Reads become reconstruction jobs. Query authors must know how facts are distributed. Dashboard queries and ad hoc SQL can get ugly fast.

That criticism is valid. It just isn't the whole picture.

What gets worse

When you decompose aggressively, the database does more work to reassemble current state. That creates several predictable problems:

- Query complexity rises because developers write more joins or rely on generated views

- ORM impedance gets worse because object models don't map cleanly to fact tables

- Operational reads suffer first especially in request paths that need many attributes at once

- Debugging can slow down because "one record" is now many rows across many relations

If you run a high-transaction application with broad record fetches, 6NF in the hot path is usually a mistake.

What gets better

The less discussed benefit is that 6NF can be excellent for sparse, irregular, or independently changing attributes.

A wide profile table often contains optional columns, semi-populated enrichment fields, and lifecycle data that only applies to some accounts. In a decomposed model, absent facts don't need placeholder values. You store only what exists. That can clean up semantics and reduce the awkwardness of null-heavy schemas.

Another advantage is write precision. Updating one attribute means touching one fact table or adding one new temporal row. That can simplify change capture and lower the blast radius of a write.

Operational insight: A system that writes tiny, isolated facts can be easier to reason about than one that repeatedly mutates a wide row with mixed business meaning.

This is also why 6NF often fits naturally beside event-based architectures. If you're already modeling state transitions as discrete facts, the schema can mirror that idea. Teams exploring event sourcing patterns in JavaScript systems often find that attribute-level persistence and append-oriented history lead to cleaner downstream automation.

The architectural compromise that usually wins

Most teams shouldn't choose between "all 3NF" and "all 6NF." They should split workloads.

Use a highly normalized or temporal core where correctness matters. Then project that data into read models that are easier for APIs, dashboards, or warehouse consumers. Those read models might be SQL views, materialized views, cache tables, or denormalized analytical structures.

That hybrid approach respects the biggest truth about sixth normal form. Its write-time precision is often better than its read-time ergonomics.

When to Use 6NF in Real-World Automation Workflows

The best modern use of sixth normal form is not "normalize everything." It's "normalize the parts of the business that change independently and must remain historically exact."

That is why 6NF has gained relevance in specialized domains like temporal databases and data warehousing, where tracking changes over time can outweigh the performance concerns common in standard business applications.

Temporal customer data

In SaaS, customer records are rarely static. Plan tier changes. Contract owner changes. Compliance status changes. Support priority changes. If those values drive billing, routing, entitlements, or automation, then "latest row wins" is often not enough.

A 6NF-style model lets each attribute carry its own timeline. That matters when operations teams ask questions such as:

- Which plan value was active when the renewal task fired?

- What email was on file when a notice was sent?

- Which account status triggered the escalation workflow?

A conventional history table can answer some of this. It gets messy when different fields change at different times and need different retention behavior.

Event stores and automation triggers

Automation platforms work best when changes are explicit. A workflow doesn't want vague record churn. It wants a fact like "customer risk_tier changed from medium to high" or "invoice_status became overdue."

6NF complements that style because the schema already treats each attribute as an independent assertion. That makes it easier to generate reliable change feeds, audit logs, and replayable state transitions.

This is especially useful in systems where humans keep trying to use spreadsheets as operational databases. Teams evaluating tools that replace spreadsheets for tutors run into a similar issue at a smaller scale. Once each student, booking, note, or billing field starts changing on its own timeline, row-oriented editing becomes fragile. Structured, fact-based data models become easier to automate than ad hoc sheets.

In automation-heavy systems, the most important row is often not the current row. It's the row that proves why the system took an action.

Warehousing and history-first analytics

6NF also fits selected warehousing scenarios. Not every warehouse wants denormalization everywhere. Some teams maintain a highly structured historical layer before projecting data into marts or reporting views.

That approach works well when analysts need to reconstruct business state at arbitrary timestamps, validate compliance history, or feed downstream rules engines. The normalized historical layer becomes the system of record, while friendlier analytical views sit on top.

Where not to use it

6NF is usually the wrong answer for:

- Simple transactional backends where records are read whole and history is secondary

- Low-complexity admin apps that mostly need forms and CRUD

- ORM-centric codebases where developer velocity depends on direct entity mapping

- Latency-sensitive endpoints that fetch many attributes in a single request path

The pattern shines when the business asks for precise temporal truth, replayability, and field-driven automation. Outside that space, it's easy to overengineer.

Practical Strategies for Adopting 6NF Principles

A full migration to sixth normal form is rarely justified. The practical move is to apply its principles only where your system benefits from atomic, time-aware facts.

Start with one problem area

Good candidates include:

- Attribute history for compliance such as status, consent, or risk fields

- Workflow-triggered data where individual field changes launch automations

- Reference data with independent timelines such as pricing, entitlements, or assignments

Leave the rest of the application in a conventional normalized model.

Build a hybrid architecture

Use 6NF-style tables as the canonical write model for a narrow domain. Then expose simpler read models through views, projections, or service-layer DTOs. That protects your application code from table explosion while preserving temporal precision underneath.

For teams building application services in JavaScript or TypeScript, this works best when the persistence layer is explicit. A straightforward guide to database access patterns in Node.js applications can help frame where to place query assembly, projections, and transaction boundaries.

Don't confuse it with EAV

Entity-Attribute-Value often appears as a shortcut to "flexible facts." It isn't the same as 6NF.

A 6NF design preserves relational structure, known domains, and meaningful constraints. EAV trades much of that safety for open-ended flexibility. If you need strict history, audited semantics, and reliable query behavior, EAV usually creates more cleanup work later.

Use 6NF when the attributes are known and important. Use looser patterns only when the schema itself is genuinely unpredictable.

Frequently Asked Questions about 6NF

Is sixth normal form the same as EAV

No. They can look similar from a distance because both avoid wide tables. But 6NF keeps attributes in structured, constrained relations. EAV collapses many attributes into a generic name-value pattern, which usually weakens validation, typing, and query clarity.

Is 6NF always slow

Not always. It is commonly slower for broad reads because it needs more joins and more reconstruction. But "slow" depends on workload shape, indexing, caching, projection strategy, and whether you're querying a write model or a read model. The practical mistake is putting raw 6NF tables directly behind endpoints that need assembled records on every request.

Does 6NF matter outside relational databases

Yes, as a design principle. The core idea is to represent facts as atomic, independently versioned assertions. Document databases, event stores, and stream processors often use different mechanics, but the same reasoning applies when you separate one changing fact from another and preserve when each became true.

Should a SaaS team use 6NF for the whole product database

Usually not. Most SaaS products need a blend of models. Keep the majority of operational data in a simpler normalized design. Use 6NF selectively for temporal, auditable, automation-heavy areas where field-level history is a first-class requirement.

What's the strongest signal that 6NF might help

You keep getting asked point-in-time questions your current schema answers poorly. If engineers are stitching together audit tables, update logs, and guessed timestamps just to explain what the system believed at a past moment, your model probably isn't expressing time cleanly enough.

If your team is deciding where temporal modeling, event-driven workflows, and automation architecture should live in the stack, MakeAutomation can help design the data flows, projections, and operational logic that turn complex system behavior into something maintainable.